Summary:

Unsure about classification vs. clustering? Classification sorts data into existing categories, like spam filtering or image recognition. Clustering uncovers hidden groups, useful for customer segmentation or anomaly detection. Both techniques power data science! Learn when to use each to unlock the secrets within your data.

Machine Learning is a subset of Artificial Intelligence and Computer Science that uses data and algorithms to imitate human learning and improve accuracy. Being an important component of Data Science, the use of statistical methods is crucial in training algorithms to make classifications.

Certainly, these predictions and classifications help in uncovering valuable insights in data mining projects. ML algorithms fall into various categories, generally characterised as Regression, Clustering, and Classification.

While Classification is an example of a directed Machine Learning technique, Clustering is an unsupervised Machine Learning algorithm. The blog will take you on a journey to learn more about these algorithms and unfold a comparison of Classification vs. Clustering.

What is Classification?

Classification is a directed approach in Machine Learning that assists organisations in making predictions of the target values based on the input provided to the models.

There are various types of classifications, including binary and multi-class classifications, amongst many others.

These are dependent on the number of classes included within the target values. The different types of classification can be further evaluated as follows:

Logistic Regression

It is the kind of Linear model used in the classification process. If you need to determine the likelihood of an event, applying the sigmoid function is important. Categorical values in classification require the application of logistic regression, which is one of the best approaches.

K-Nearest Neighbours (KNN)

The distance between one data point and every other accomplished parameter is calculated using distance metrics like Euclidean distance, Manhattan distance, and others.

Therefore, to categorise the output correctly, you need a vote with a simple majority from each data’s K closest neighbours, which is highly important.

Decision Trees

Decision Trees are non-linear models, unlike logistic regression, which is a linear model. Using a tree structure is helpful in constructing the classification model, which includes nodes and leaves.

Several if-else statements are used in this method to break down a large structure into various smaller ones. This is then used to produce the final result. In both regression and classification issues, the use of decision trees can be highly helpful.

Random Forest

The use of multiple decision trees in an ensemble learning approach can be beneficial in predicting the results of large attributes. Consequently, each brand of the decision tree will yield a distinct result.

Multiple decision trees are crucial for categorising the final conclusion in the classification problem. Regression problems are further solved by calculating the average of the projected values from the decision trees.

Naïve Bayes

Bayes’ Theorem works as the foundation for the method of classification. It essentially works on the assumption that the presence of one feature does not rely on the presence of other characteristics. Alternatively, there is no connection between the two of them.

Therefore, the result of this supposition evaluates that it does not perform quite well with complicated data. The main reason is that the majority of the data sets have some type of connection between the characteristics. Hence, the assumption causes a problem.

Support Vector Machine

The classification algorithm makes use of a multidimensional representation of the data points. Hyperplanes are useful in separating the data points into groups. It is used to show an n-dimensional domain for the n available features and helps create hyperplanes for splitting the pieces of data with the greatest margin.

Application of Classification

Classification, another fundamental technique in Data Science, complements clustering by focusing on sorting data points into predefined categories. Here’s how classification finds uses across various fields:

Email Spam Filtering

Classification algorithms are the backbone of spam filters. They analyze emails and classify them as spam or inbox based on features like keywords, sender information, and content structure.

Image Recognition

Image recognition relies on classification models to categorize images into different classes, such as identifying objects (cars, people, furniture) or scenes (beaches, mountains, cityscapes) within an image.

Sentiment Analysis

Social media analysis and marketing research leverage classification to understand the sentiment behind text data. This helps categorize opinions and reviews as positive, negative, or neutral.

Fraud Detection

In finance and e-commerce, classification algorithms analyze transactions to identify fraudulent activities. They can flag suspicious spending patterns or account behaviour that deviates from normal.

Medical Diagnosis

While not a replacement for medical expertise, classification models can be trained on medical data to assist doctors. They can analyze patient information, scans, and test results to predict potential diseases or classify risk factors.

Loan Approval

Banks use classification models to assess loan applications. The models can categorize applicants as high-risk, low-risk, or somewhere in between by analysing factors like income, credit history, and debt-to-income ratio.

These are just a few examples, and classification algorithms play a vital role in various applications like weather forecasting, self-driving cars, and even algorithmic trading in finance.

What is Clustering?

Clustering refers to the Machine Learning technique that belongs to the category of unsupervised learning. The purpose of the algorithm is to create clusters out of the collections of data points that have certain effective properties.

The data points certainly belong to the data points of a certain cluster that have similar characteristics. On the other hand, data points belonging to other clusters must have distinct characteristics from one another as humanely as possible. Two different categories of clustering make up the entire concept: soft clustering and hard clustering.

K-Means Clustering

The beginning of establishing a fixed set of K segments and then using the distance metrics to compute the distance that separates each data item from the cluster centres of the various segments is the K-Means Clustering.

Accordingly, it places each data point with each k group based on how far apart from the other points it is.

Agglomerative Hierarchical Clustering

The formation of a cluster is possible by merging data points based on the distance metrics and the criteria which is useful for connecting these clusters.

Divisive Hierarchical Clustering

It begins with the data sets combined into one single cluster, and then it divides these datasets using the proximity of the metrics together with the criterion. Both the hierarchical clustering and contentious clustering methods are seen as dendrograms. It can also be used to determine the optimal number of clusters.

DBSCAN

This approach in the clustering algorithm is based on the density whereby some algorithms like K-Means perform well on clusters with a reasonable amount of space between them. It further produces clusters that have spherical images or shapes as well.

DBSCAN is used when the form of input is arbitrary, although it is less suspected to be aberrations than other scanning techniques. Within a given radius, it brings together various datasets which are adjacent to a large number of other datasets.

OPTICS

Density-based clustering, like the DBSCAN, makes use of the OPTICS strategy along with a few other factors, and it has a far greater computational burden. A reachability plot is created, but it does not break the data into clusters, which can aid with understanding clustering.

BIRCH

In terms of organising the data into different groups, it is important to summarise it first. Consequently, it first summarises the data and then uses the summation to form clusters. However, it is important to focus on the fact that it is limited to only working with numerical properties that have the ability to express spatially.

Application of Clustering

Clustering is a powerful technique used in various fields to uncover hidden patterns and structures within unlabeled data. These are just a few examples, and clustering applications continue to grow in various domains.

Customer Segmentation

Businesses use clustering to group customers based on purchase history, demographics, or browsing behaviour. This helps them target marketing campaigns and promotions more effectively.

Recommendation Systems

Clustering algorithms are used to identify users with similar preferences. This allows recommendation systems to suggest products, movies, or music a user might be interested in.

Image Segmentation

In image processing, clustering helps segment images into meaningful regions, such as separating foreground objects from the background. This is useful for tasks like object recognition and image editing.

Social Network Analysis

Researchers can identify communities with similar interests or behaviours by clustering users on social networks. This can provide insights into social trends and how information propagates.

Scientific Discovery

In biology, clustering helps classify genes or proteins based on their functions. In astronomy, it is used to group galaxies based on their properties.

Anomaly Detection

Clustering can be used to identify data points that fall outside of expected clusters. This can be useful for fraud detection in financial transactions or identifying unusual system activity in network security.

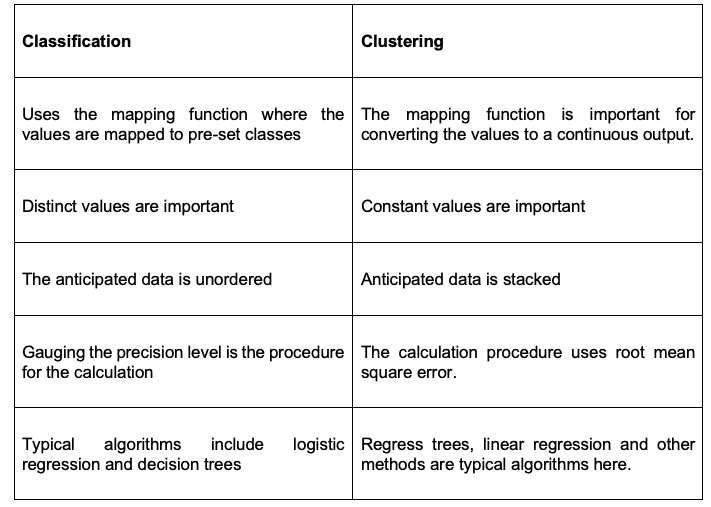

Difference Between Classification and Clustering

Frequently Asked Questions

What is The Relationship Between Clustering and Classification in Machine Learning?

Classification and Clustering are both Machine Learning algorithms that are used for different purposes. The classification algorithm is a supervised Machine Learning algorithm used to predict the different categories of target values.

In the case of clustering, it is an unsupervised Machine Learning algorithm that creates clusters out of the collection of data points that include certain effective properties.

What is Hard and Soft Clustering in Machine Learning?

Hard and soft clustering are the two clustering methods in Machine Learning. Soft Clustering is about the output providing a probability likelihood of data points belonging to each of the pre-defined number of clusters. On the other hand, hard clustering focuses on one data point which can belong to one cluster only.

Why Do We Need Clustering?

Data Scientists and others use clustering to gain important insights on clusters of data points by observing them and applying the clustering algorithm to the data. Clustering is required to identify groups of similar objects within a dataset with two or more variable quantities.

Conclusion

Thus, Classification and Clustering are two of the most efficient Machine Learning techniques useful in enhancing business processes. The difference mentioned above of Classification vs. Clustering proves that you can use them differently to understand your customers and improve their experiences.

By analysing and targeting consumers using ML Techniques, businesses can create a loyal customer base and optimise their Return on Investment.